With the release of vSphere 5.5, it’s inevitable there will be a lot of blogging by vendors comparing their products to vSphere. That is expected and not surprising. It’s the job of vendor “Evangelists” to do this.

Apologies for the length of this post in advance.

I am a freelance consultant – I have done quite a lot of work with vSphere and hope to do more. I also fully expect to be working with, and coming across Hyper-V a lot in the future. So the opinion expressed here is personal and not tied to any vendor – I do not rely on VMware directly for my work. In fact the last project I worked on was a new Hyper-V implementation where a customer decided to go that route.

I have used Hyper-V 2012 in my lab and, while I was impressed with many of its features and the improvements therein, I found installing and configuring it more difficult and time-consuming than vSphere. I have one or two customers who have had similar experiences.

Part of this is probably the learning curve, and part is due to design. But I do assume I will be working with it again soon.

Scrutinizing Vendor-Provided Data Points

However, it annoys me when people who represent vendors, either directly or through very close affiliations, present incorrect information. A lot of customers take information published by Internet powerhouses at face value, so it should be accurate, even allowing for a little “bias”.

In my experience, by and large, VMware does not attack Microsoft – their product evangelists focus on providing information on their own products to support their customers. The VMware community is massive and it’s all about sharing information, with everyone, all the time. It’s one of the most impressive things I have seen. I recently attended the Dublin VMware User Group (VMUG) where a senior VMware Technical Marketing Architect provided a comparison of Hyper-V and vSphere. He was fair and honest about where both products were strong. It was refreshing to hear someone so well known (Top-10 vSphere blogger) being so up front.

Case in Point: Microsoft's vSphere 5.5 vs. 2012 R2 Hyper-V Comparison

Let’s take a look at the following blog from Keith Mayer. Keith is a Senior Microsoft Evangelist. I just had to write a post on this, having engaged with Keith, who is a nice guy BTW. I’ve been in a back-and-forth conversation with Keith on Twitter. Keith has some very useful information on his site, which I suggest you check out. However, I wish to comment on this blog entry, entitled "VMware or Microsoft? Comparing vSphere 5.5 and Windows Server 2012 R2 Hyper-V At-A-Glance".

I want to call out a few elements on this blog which I believe are not correct. Ultimately make your own mind up and do your own homework rather than take my word for it. The blog compares Hyper-V 2012 R2 and vSphere 5.5.

In the screenshots, Hyper-V is in the second column from the left and vSphere in the third.

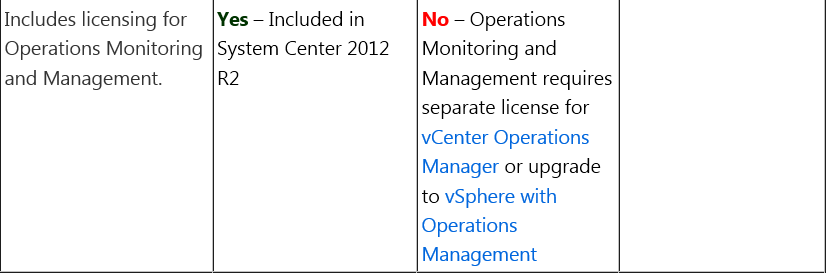

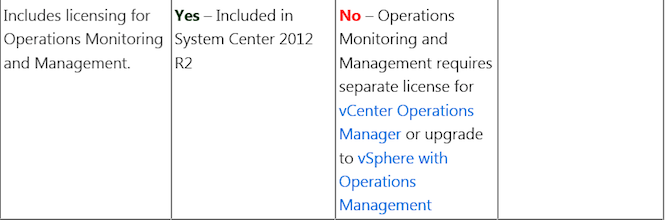

Monitoring & Management

First inaccuracy:

This neglects to mention one thing, and that is that vCenter (normally used by most customers using HA/clustering) is an “Operations Monitoring” and “Management” platform.

In fact most people manage ALL of their vSphere environment through vCenter – not vCOPs. Well, not yet anyway.

vCenter has all the performance and monitoring data, since installation, for the entire vSphere environment being managed. The separately mentioned product (vCOPs) extracts data from vCenter to build its performance and inventory schema/view. For advanced event correlation and analytics you can use vCenter Operations Management (vCOPs), but this is also offered as part of a bundle, vSphere with vCenter Operations Management, so strictly speaking it is not always a “separate” license.

Secondly, is Microsoft giving away System Center 2012 (VMM) for free? I tried to download it just now, and it only offered me an “Evaluation”. See below.

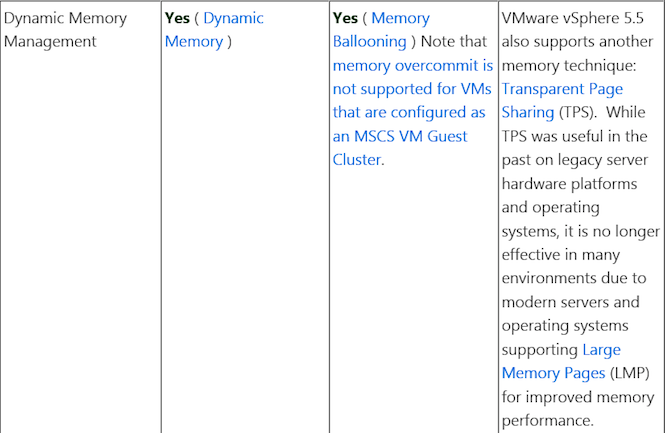

Memory Management

Next one:

I don’t agree with this. To say TPS is useful only on “legacy” server hardware platforms is nonsense. TPS is very effective and results in very significant memory savings for customers. It also helps to reduce resource duplication. Large page support needs to be manually set up in vSphere, which shows that it is not a “standard” feature. However, vSphere supports both scenarios, and VMware guidance is to use large pages depending on the application.

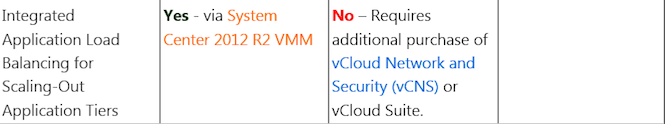

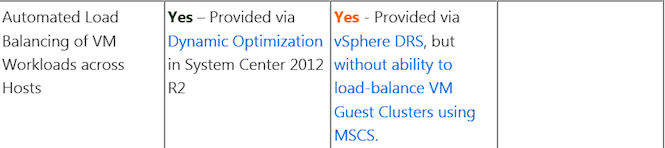

Application Load Balancing

Next One:

Is System Center 2012 R2 VMM free? The comparison seems to indicate that you have to pay for vCNS but not for SCVMM. It would certainly appear from Microsoft’s website (just now) that this is not the case. Note the term “Evaluation”. If it is free, why is it only available for evaluation?

Migration Options

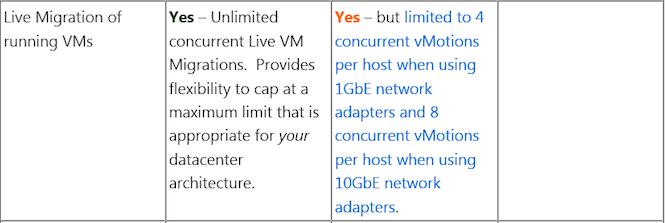

Next one: This is a particular hobby horse of mine:

Now people need to be very careful when reading things like this. I have engaged in conversation with Keith on this topic and he has admitted that this feature is designed to allow customers to create their own “cap” on the maximum number of concurrent operations. (He did change his blog to be fair.)

However, you should know that if you use DRS, vSphere performs a cost-benefit-risk analysis before migrations, and “allows” a cost of 30% of a CPU core for a single vMotion operation on a 1Gb/s adapter, and 100% of a CPU core for a vMotion on a 10Gb/s adapter.

That shows what DRS’s algorithm expects in terms of used cycles for a vMotion operation. This is conservative and based on engineering know-how and experience. Keith feels this is not so much of an issue due to the scalability of modern hardware, and did accept that this is an “engineering” limit, i.e. VMware has taken a more conservative approach whereas Microsoft allows you to run as many as you like. VMware could take the handbrake off but chooses to bake in limits like this to protect the stability of customers’ environments. Otherwise, why don’t they say “unlimited” too? Make your own mind up.
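To make those figures concrete, here is a rough back-of-the-envelope sketch in Python. It simply multiplies the per-vMotion costs quoted above by a number of concurrent migrations. The linear scaling, the function name and the adapter labels are my own assumptions for illustration; this is not how DRS itself computes anything.

```python
# Per-vMotion CPU cost figures quoted in the text above,
# expressed as fractions of one CPU core.
VMOTION_CORE_COST = {"1GbE": 0.30, "10GbE": 1.00}


def budgeted_cores(concurrent_vmotions: int, adapter: str) -> float:
    """Return the CPU cores budgeted for N concurrent vMotions.

    Assumes the cost scales linearly with the number of migrations,
    which is a simplification made for this illustration only.
    """
    if concurrent_vmotions < 0:
        raise ValueError("concurrent_vmotions must be non-negative")
    if adapter not in VMOTION_CORE_COST:
        raise ValueError(f"unknown adapter type: {adapter}")
    return concurrent_vmotions * VMOTION_CORE_COST[adapter]


if __name__ == "__main__":
    # Hypothetical concurrency levels, chosen only to show the trend.
    for adapter in ("1GbE", "10GbE"):
        for count in (1, 4, 8):
            cores = budgeted_cores(count, adapter)
            print(f"{count} vMotion(s) on {adapter}: {cores:.1f} core(s)")
```

Under these assumptions, eight concurrent migrations over 10Gb/s would account for eight cores' worth of CPU, which is why an "unlimited" figure deserves some scrutiny.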

There is quite a bit of commentary on Microsoft Cluster support on vSphere and Hyper-V superiority in that regard. That’s true, and it is what you would expect. Same-vendor interoperability is always greater than interoperability BETWEEN vendors.

However… Remember that 99.99% of customers don’t use MSCS on vSphere or Hyper-V, and most of them want to move away from the complexity of MSCS, Veritas Cluster and other clustering frameworks to platforms like vSphere (and Hyper-V), for the inherent features in the platform i.e. HA. That was one of the reasons for the success of ESX in the first place, as well as vMotion.

A customer I worked with had a functional requirement for their virtualization project, which was to decommission all existing physical Microsoft Clusters due to higher management complexity. This was not because the clusters weren’t reliable, or because the customer wasn’t happy with them. They were, but MSCS did introduce complexity, like any clustering solution.

So this one is a little bit irrelevant in my view. Even products like Exchange don’t use MSCS (Exchange uses DAG).

Make up your own mind on this one too.

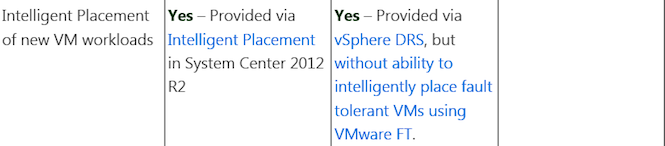

New VM Workload Placement & Load Balancing

Next one:

This is a 0.01% use case. Pretty uncommon and not really that relevant for most customers.

Next one:

Again that Microsoft Cluster qualification: not that relevant for a lot of customers.

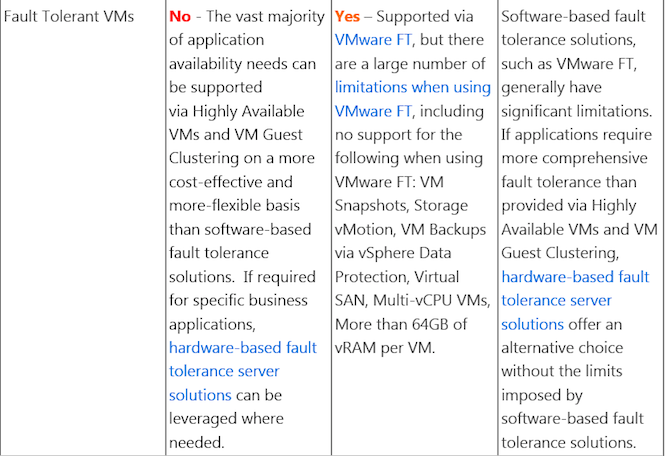

Fault Tolerance

Now we move to Fault Tolerance:

Why is this not just a “No” for Hyper-V and a “Yes” for vSphere? Achieving this functionality is a difficult engineering challenge, but here only the limitations are pointed out. There are plenty of “qualifications” with Fault Tolerance and, mainly due to the single-vCPU limitation, not a huge number of people use it, but it would be better to stick to the Yes/No.

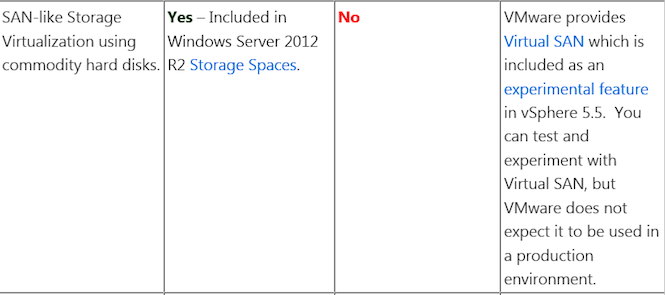

Next one:

VSAN is an experimental product? VSAN is in beta and will be launched soon. Calling it “experimental” is a bit cheeky. See VMware’s website just now:

Next One:

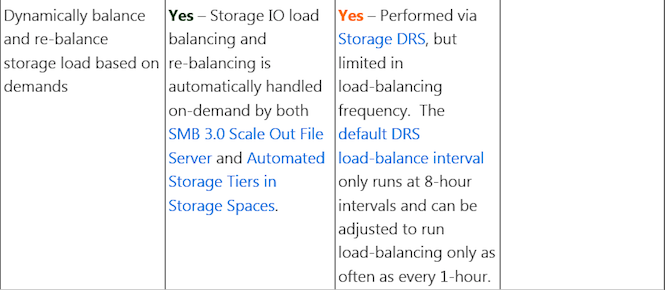

It looks like Storage DRS-like functionality is not available for regular block/NFS storage on Hyper-V, only for SMB 3.0 and with Storage Spaces. So if you don’t use Storage Spaces, is it supported? It’s not clear to me, but make your own mind up.

I think commenting on the frequency of Storage DRS is disingenuous. This is a vSphere design feature to ensure minimal impact on customer environments, and more accurate calculations. It is not an engineering “limit”. I think it makes a lot of sense that it bases recommendations on data gathered over an extended period of time.

As per Frank Denneman’s blog below.

http://frankdenneman.nl/2012/05/07/storage-drs-load-balance-frequency/

Storage Provisioning & Network Virtualization

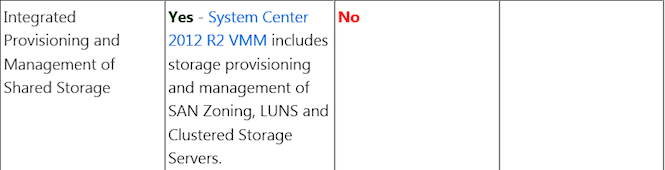

Next One:

There are plenty of storage provisioning “operations” supported in vCenter, and also plenty of plugins (Hitachi/NetApp/EMC) that allow array-based activities, like LUN provisioning. These are fully integrated plugins that appear as a tab in vCenter.

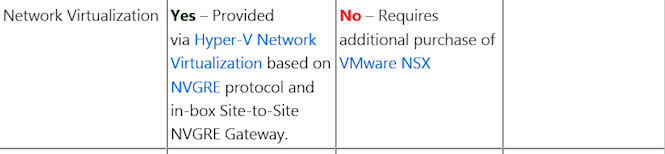

Next One:

It depends on your definition of “network virtualization”. This is a complex topic, and the comparison offers a rather simplistic commentary on what is and is not available.

The List Could Go On...

The reason I felt compelled to write this post is that so-called Evangelists are painting a picture which in some cases is not the whole picture.

I think this is a fairer comparison which does call out some benefits of Hyper-V and some benefits of VMware.

Ultimately, as with all solution design and procurement, it always depends on business requirements.

The big selling point regarding Hyper-V being “free” is not real in my opinion. VMware engages in enterprise license agreements too. Don’t just believe the list price.

Ed. Note: Be sure to check out Scott & David's comments and side-by-side comparison on vSphere vs. Hyper-V (Free and paid versions).

It is only of late that Microsoft has stopped replicating vSphere features (to achieve feature parity) and has started to create its own. From this point forward, all of us benefit from this competition and increased innovation. Competition is good for the entire IT industry. I have no problem with it.

My take is that hypervisors are becoming a commodity, and articles like this are simplistic and biased and don’t help the customer. It’s not really that important that one vendor supports 4000 of something and the other supports 8000, as typically those limits are not hit. It would be folly for a virtualization architect to create such a dense design that, if one thing falls over, 8000 VMs disappear.

Update:

Ed. Note: This post has generated a lot of productive discussion, including feedback from both sides. Paul has posted a follow-up response to the feedback received. Don't miss it!

What Say You?

Make your own mind up and share your take in the comments!
