This is a topic that has confused me more than once. I would read the help documentation and the VMware KB article, think I understood it, and later say "wait, what?"

First, let's look at a host...

Analyzing Maximum vSphere vCPUs on a Host

In vSphere 5.1 (I'll update this for future versions), you can configure up to 64 vCPUs on a virtual machine if you have vSphere Enterprise Plus (the number goes down with lower editions of vSphere). BUT you are also limited to the number of logical CPUs that your physical server has available: a VM cannot be assigned more vCPUs than that.

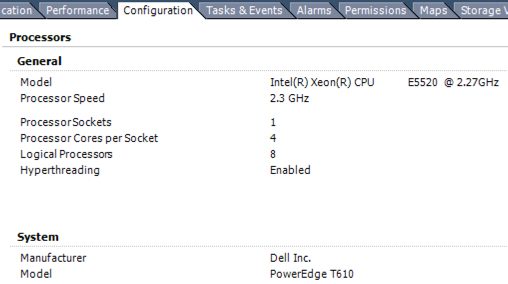

If we take a look at one server in my lab, it's a Dell T610 with a single physical CPU socket that has 4 cores (quad-core). Hyperthreading is enabled, which doubles the number of logical processors presented, for a total of 8 logical processors.

What this means is that the maximum number of vCPUs that I could configure for a VM on this host would be 8. Let's verify.

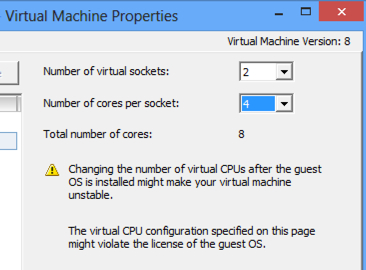

If we edit the settings of a VM on that host, we see that we can configure it with 8 virtual sockets and 1 virtual core per socket, 4 sockets and 2 cores per socket, 2 sockets and 4 cores per socket, or 1 socket and 8 cores per socket (all of which, if you multiply, total 8):

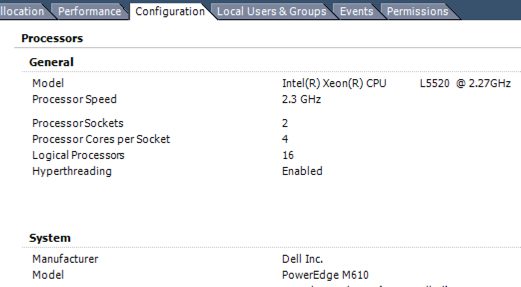

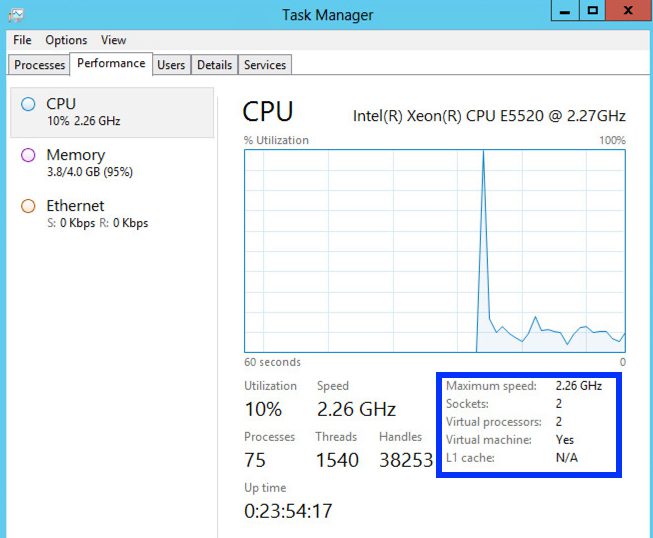

On another host, a Dell M610, I have 2 physical sockets, 4 cores per socket, with hyperthreading enabled, which gives me a total of 16 logical processors:

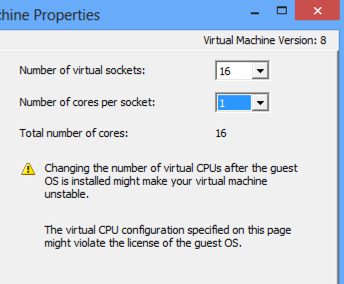
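
The arithmetic behind both hosts can be sketched in a few lines of Python. This is only an illustration of the rule described above, not anything vSphere itself exposes: the function names and the `VSPHERE_51_MAX_VCPUS` constant are hypothetical, and the 64 vCPU figure is the Enterprise Plus limit quoted earlier.

```python
# Hypothetical helper names; the 64 vCPU cap is the vSphere 5.1
# Enterprise Plus limit mentioned earlier in this article.
VSPHERE_51_MAX_VCPUS = 64


def logical_processors(sockets, cores_per_socket, hyperthreading):
    """Logical processors a host presents (hyperthreading doubles the count)."""
    threads_per_core = 2 if hyperthreading else 1
    return sockets * cores_per_socket * threads_per_core


def max_vcpus_per_vm(sockets, cores_per_socket, hyperthreading,
                     edition_limit=VSPHERE_51_MAX_VCPUS):
    """A VM is capped by both the edition limit and the host's logical CPUs."""
    host_limit = logical_processors(sockets, cores_per_socket, hyperthreading)
    return min(edition_limit, host_limit)


# Dell T610: 1 socket x 4 cores, hyperthreading on
print(max_vcpus_per_vm(1, 4, True))  # 8
# Dell M610: 2 sockets x 4 cores, hyperthreading on
print(max_vcpus_per_vm(2, 4, True))  # 16
```

Whichever limit is lower wins, which is why neither lab host gets anywhere near 64 vCPUs per VM.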

If I look at a VM on that host (note that these VMs need to be hardware version 8 or above), I can configure any combination of virtual sockets and cores per socket that totals no more than 16 (could be 16 x 1, 1 x 16, 2 x 8, 8 x 2, 4 x 4, etc.).
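
To see every sockets-by-cores layout for a given vCPU count, you can simply list the factor pairs. The sketch below is plain arithmetic with a hypothetical function name; the vSphere Client may offer a narrower set of choices than this in its drop-downs.

```python
from typing import List, Tuple


def vcpu_topologies(total_vcpus: int) -> List[Tuple[int, int]]:
    """All (virtual sockets, cores per socket) pairs whose product is total_vcpus."""
    return [
        (sockets, total_vcpus // sockets)
        for sockets in range(1, total_vcpus + 1)
        if total_vcpus % sockets == 0
    ]


print(vcpu_topologies(8))   # [(1, 8), (2, 4), (4, 2), (8, 1)]
print(vcpu_topologies(16))  # [(1, 16), (2, 8), (4, 4), (8, 2), (16, 1)]
```

Smaller totals work the same way: a 12 vCPU VM on the 16-logical-processor host could be 1 x 12, 2 x 6, 3 x 4, and so on.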

Now that you know the limitations of the physical hosts and hypervisor, let's look at why this distinction between virtual sockets and virtual cores exists and what you should choose.

The Guest OS Knows the Sockets and Cores

Warning! A very important part of understanding this is that when you configure a vCPU on a VM, that vCPU is actually a virtual core, not a virtual socket. Also, a vCPU has traditionally been presented to the guest OS in a VM as a single-core, single-socket processor.

What you might not have thought about is that the guest operating systems know not only the number of "CPUs" but also the number of sockets and cores that the CPU has available. As Kendrick Coleman shows in his post on vCPU for License Trickery, you can use the CPU-Z utility to find out how many sockets and cores your virtual machine has.

Does it make any difference to the performance of the applications inside if the OS thinks it has 4 sockets with 2 cores per socket or 1 socket with 8 cores? As far as I can tell, NO (but I welcome your comments). The guest OS just schedules the threads from each process onto a virtual CPU core, and the VMkernel scheduler in turn schedules those vCPUs onto the logical CPUs of the physical host.

If it doesn't have any effect on performance, why would VMware even offer this option to specify the number of cores per socket for each VM? The answer is that it's all related to software licensing for the OS and applications.

OS and Application Licensing Per Socket

Many (too many) operating systems and applications are licensed "per socket". You might pay $5000 per socket for a particular application. Let's say that Windows Server 2003 is limited to running on "up to 4 CPUs" (or sockets). Say that you had a physical server with 4 quad-core CPUs, for a total of 16 cores, and then enabled hyperthreading for a total of 32 logical cores. If you configured your VM to have up to 4 "CPUs", as the license specified, and each vCPU were presented as its own single-core socket, those 4 vCPUs would only run on 4 physical cores. However, if you had installed that same Windows OS directly on the same physical server, it would have run on up to 4 sockets but, with each socket having 4 cores, it would have offered up to 16 cores to Windows (while still not breaking your end user license agreement). In other words, you would get to use more cores and likely receive more throughput.
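
The licensing effect is easy to put into numbers. The sketch below uses a deliberately simplified model that I am assuming for illustration: the guest OS uses every core on a socket but ignores any sockets beyond its licensed count. The function name and parameters are hypothetical, and real products may enforce their limits differently.

```python
def usable_cores(license_socket_limit, vm_sockets, cores_per_socket):
    """Cores the guest can use if it ignores sockets beyond its license limit.

    Simplified assumption: the license counts sockets only, so every core
    on a licensed socket is usable.
    """
    licensed_sockets = min(vm_sockets, license_socket_limit)
    return licensed_sockets * cores_per_socket


# 4 vCPUs presented as 4 single-core sockets: the traditional layout
print(usable_cores(4, vm_sockets=4, cores_per_socket=1))   # 4
# 16 vCPUs presented as 16 single-core sockets: 12 of them go unused
print(usable_cores(4, vm_sockets=16, cores_per_socket=1))  # 4
# 16 vCPUs presented as 4 sockets x 4 cores: all 16 are usable
print(usable_cores(4, vm_sockets=4, cores_per_socket=4))   # 16
```

The last case is the one that matches what the same OS would have seen on the physical server.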

In the end, what you are doing here is gaining granular control over how many virtual sockets and how many virtual cores per socket are presented to each virtual machine. This way, you can ensure you get the performance you need without having to buy extra licenses and without violating your EULA.
