Yes. It’s true that your shiny new all-flash storage array’s solid state disks will wear out over time. However, compared with the flash storage of yesteryear, this wear process isn’t nearly as serious a problem as it used to be. This is just one of the evolving facts that litter the flash storage landscape and that can muddy the waters when people are considering adding flash storage to their data centers. Facts that were true just a few years ago have changed drastically, and people’s understanding of those changes has lagged behind. In this article, I will help you to understand what’s real and not real about modern flash storage so that you can make the best possible decision about your future storage purchases.

“Solid State Disk” and “Flash” Are Basically Interchangeable Terms

The terms solid state disk and flash are often used interchangeably and, for the most part, that’s just fine. However, for those who prefer precision in their terminology, solid state disk generally refers to the physical device – such as a 2.5” form factor drive with a SATA connector – that houses the individual flash modules. When discussing this class of storage, what’s really being discussed is NAND-based flash storage. When describing a storage-laden PCI-e card, people generally say that it’s a PCI-e card with flash modules. All that said, don’t get too caught up in the semantics.

Today’s Flash Is NAND-Based (Negated AND gate)

What the heck does “NAND-based flash” really mean, anyway? “NAND” refers to the type of logic gate (transistor gate) that is used for data storage in the device. NAND flash is a nonvolatile type of memory, which means that data stored on such devices is retained even after power to the device is removed. Compare this to the RAM in your computer, which is volatile. When the computer gets turned off, the contents of RAM are lost. This is one of the reasons that computers with traditional hard drives take a while to boot: the operating system files have to be copied from the hard drive into volatile RAM before the system can function. NAND-based flash devices are broken up into individual cells.

The logic gate performs a Boolean function in order to determine the contents of a flash-based cell. Using what are called the floating gate and the control gate, the system determines how many electrons are in each cell. When there are no electrons present, the cell is considered to be empty. When there are electrons present, the cell has data. This is basically a voltage check that is performed on a per-cell basis. Different kinds of flash storage measure a cell’s voltage in different ways.

Watch: The History of Flash Memory & Storage: Interview with Flash Memory Pioneer Frankie Roohparvar, COO, Skyera

There Are Multiple Kinds Of Flash Storage Available

When considering purchasing flash storage for a data center, administrators need to understand that there are different kinds of flash storage from which to choose. Each type of flash carries with it different costs and characteristics that make each suitable for differing purposes.

Single Level Cell (SLC)

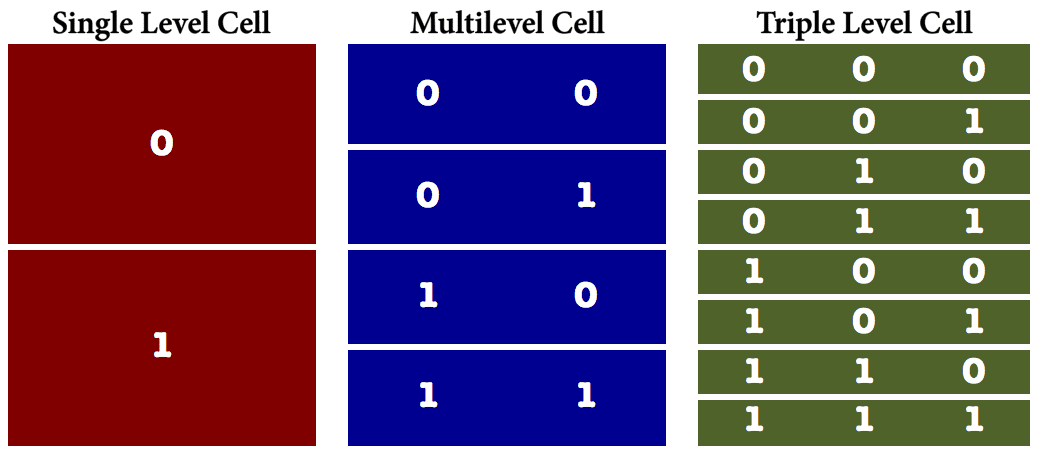

When it comes to raw read performance, single level cell (SLC) flash storage is at the top of the charts. Part of the reason is the fact that a cell has only one of two easy-to-read states: On or Off. The cell is considered On when electrons are present and Off when they are not. On the downside, though, since SLC storage allows only the On and Off states, SLC offers the lowest capacity per cell of the various kinds of flash storage.

Multi-Level Cell (MLC)

Recognizing the need for more capacity-dense storage, the solid state storage market responded with the introduction of Multi-Level Cell (MLC)-based storage. Rather than just a simple Yes/No value as is the case with SLC, MLC-based devices can store multiple states per cell – generally up to four, which corresponds to two bits. This enables MLC storage to offer significantly more storage density than is possible with SLC devices. However, this capacity gain comes at the expense of overall performance. Because MLC devices can have several different voltage states – which is what determines that cell’s value – it takes longer to determine the exact value for each cell. MLC storage also can’t withstand as many erase/write cycles as other kinds of flash storage such as SLC and eMLC.

This kind of MLC is sometimes referred to as commodity MLC storage.
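The per-cell voltage check described above can be sketched as a comparison against reference thresholds. This is only an illustration: the normalized 0.0 to 1.0 voltage scale, the threshold values, and the `read_state` helper are assumptions made for the example, not figures from any real device.

```python
from bisect import bisect_right

# Hypothetical reference thresholds on a normalized 0.0-1.0 voltage scale.
# One threshold separates two states (SLC); three thresholds separate
# four states (MLC).
SLC_THRESHOLDS = [0.5]
MLC_THRESHOLDS = [0.25, 0.5, 0.75]

def read_state(voltage, thresholds):
    """Return the index of the voltage band that the cell's voltage falls in."""
    # bisect_right counts how many thresholds lie at or below the voltage,
    # which is exactly the band index.
    return bisect_right(thresholds, voltage)

print(read_state(0.1, SLC_THRESHOLDS))  # 0 (Off)
print(read_state(0.9, SLC_THRESHOLDS))  # 1 (On)
print(read_state(0.6, MLC_THRESHOLDS))  # 2 (third of four states)
```

The SLC case has a single boundary to check, while the MLC case has three, which is one way to picture why finer-grained cells need more care to read.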

Enterprise MLC

Where there is commodity hardware to be found, there will also be premium hardware lurking about. In this instance, that premium offering consists of what is known as Enterprise MLC flash storage (eMLC). eMLC storage has tighter voltage tolerances than MLC and can generally withstand far more erase/write cycles than regular MLC. As such, it is often used for workloads with heavy erase/write activity, since it lasts longer than regular MLC. That said, many storage vendors today simply rely on the much less expensive consumer grade MLC storage type and use a software layer to protect data in the long term.

Watch: Flash Storage Types Explained: SLC, eMLC & MLC with Pure Storage Software Architect Neil Vachharajani

Triple Level Cell (TLC)

As the name implies, triple level cell, created by Toshiba back in 2008, is an evolution of solid state storage technology that continues to address the capacity disparity between flash and spinning disk storage types. TLC flash provides additional voltage levels and more bits per cell, which enables more data to be stored in each cell. As such, the cost per GB of TLC storage is far lower than that of SLC and MLC. There is, however, a tradeoff and, again, it comes at the expense of performance because of the additional time that it takes to ascertain a cell’s value.
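The relationship between bits per cell and the number of voltage states can be worked out directly: each extra bit doubles the number of states the controller has to distinguish. The sketch below assumes the commonly cited figures of one, two, and three bits per cell for SLC, MLC, and TLC; the helper names are made up for the illustration.

```python
# Bits stored per cell for each flash type (assumed, commonly cited values).
CELL_TYPES = {"SLC": 1, "MLC": 2, "TLC": 3}

def voltage_states(bits_per_cell):
    """Number of distinct voltage states needed to encode the given bits."""
    return 2 ** bits_per_cell

def cells_needed(capacity_bits, bits_per_cell):
    """Cells required to hold capacity_bits, rounded up."""
    return -(-capacity_bits // bits_per_cell)

for name, bits in CELL_TYPES.items():
    print(name, voltage_states(bits), cells_needed(24, bits))
# SLC 2 24
# MLC 4 12
# TLC 8 8
```

Storing the same 24 bits takes a third as many TLC cells as SLC cells, but each TLC cell must be resolved into one of eight states rather than two.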

Wear And Tear Is A Real Phenomenon

It’s no secret that solid state storage eventually wears out and fails. In fact, for a period of time, this was a major hurdle and, along with very high cost, prevented failure-averse enterprises from adopting the technology. Flash storage eventually fails because of the way that the storage needs to be prepared when new data is written to it. Before a cell can be populated, it must first be erased. This erasure process has a negative impact on the storage itself and each cell can only withstand a certain number of erasures before that cell ultimately becomes unusable.

In the old days, it was possible for a single area of the disk to be subjected to constant erase/write cycles, ultimately leading to the failure of portions of the storage with associated loss of data. The fact that NAND-based storage fails has not changed. What has changed, however, are the controllers that manage the erase and write cycles to which the storage medium is subjected. Today, almost every reasonable solid state storage device implements a technique known as wear leveling. Wear leveling spreads writes across the device so that no one area is constantly impacted. Consider a scenario in which a device with 100,000 cells is subjected to 1 million erase/write cycles. Without wear leveling, a single cell could get all 1 million cycles. With wear leveling, theoretically, each cell would only be subjected to 10 erase/write cycles. Wear leveling has significantly extended the overall life of solid state storage. Along with declining cost for the storage, wear leveling is one of the biggest factors that have contributed to enterprise adoption.
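The 100,000-cell scenario can be reproduced with a toy model. This is a minimal sketch under stated assumptions: the round-robin placement stands in for wear leveling, and "every write lands on cell 0" stands in for the worst case without it. Real controllers use more sophisticated, vendor-specific algorithms, and the function name `max_cell_wear` is invented for the example.

```python
def max_cell_wear(num_cells, total_cycles, wear_leveling):
    """Return the erase/write count of the most heavily used cell."""
    wear = [0] * num_cells
    for i in range(total_cycles):
        # Assumption: with leveling, writes rotate round-robin over all cells;
        # without it, model the worst case where every write hits cell 0.
        target = i % num_cells if wear_leveling else 0
        wear[target] += 1
    return max(wear)

print(max_cell_wear(100_000, 1_000_000, wear_leveling=False))  # 1000000
print(max_cell_wear(100_000, 1_000_000, wear_leveling=True))   # 10
```

The second figure is simply 1,000,000 / 100,000 = 10, the theoretical even split described in the scenario.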

The Rise Of Flash Storage Has Shifted The Primary Cost Metric

In many traditional environments, the primary – and sometimes only – metric for storage procurement was $/GB. In other words, how much raw capacity can I cram into my budget? There have always been variations in this figure between different kinds of hard disks. For example, 7200 RPM SATA disks almost always have a much lower cost per GB than 15K RPM SAS disks. However, this differentiation only comes into play as storage performance starts to become a factor in the procurement decision.

In recent years, storage performance has become a prime factor in many new storage purchases, particularly as virtualization and more I/O intensive workloads have become the norm in the data center. Today, it’s not unusual to see two metrics associated with new storage purchases: $/GB (or $/TB) and $/IOPS. In some application use cases, the $/IOPS metric might even outweigh $/GB. Because solid state storage can drive thousands, hundreds of thousands, or even millions of IOPS, it is incredibly affordable compared with traditional hard drives when judged on the performance metric alone.
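To see how the two metrics can point in opposite directions, here is a small worked comparison. Every number in it is a placeholder chosen to make the arithmetic easy, not a real price or benchmark, and the `Device` class is hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Device:
    name: str
    price_usd: float
    capacity_gb: float
    iops: float

    @property
    def usd_per_gb(self):
        return self.price_usd / self.capacity_gb

    @property
    def usd_per_iops(self):
        return self.price_usd / self.iops

# Placeholder figures for illustration only; substitute real quotes.
hdd = Device("hypothetical 15K SAS disk", 300.0, 600.0, 200.0)
ssd = Device("hypothetical SSD", 800.0, 400.0, 40_000.0)

for d in (hdd, ssd):
    print(f"{d.name}: ${d.usd_per_gb:.2f}/GB, ${d.usd_per_iops:.2f}/IOPS")
# hypothetical 15K SAS disk: $0.50/GB, $1.50/IOPS
# hypothetical SSD: $2.00/GB, $0.02/IOPS
```

With these made-up inputs the hard disk wins on $/GB while the SSD wins on $/IOPS by a wide margin, which is the pattern the paragraph above describes.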

Flash Storage Can Be Applied In Many Architectural Locations

Perhaps one of the biggest decision points when it comes to flash storage is deciding where in the architecture to place the technology so that it provides the biggest positive impact on storage performance.

Server-side

Server-side storage is becoming a really interesting space as vendors look for ways to improve overall storage performance and, in some cases, make attempts to rework the entire data center. This article focuses on just storage, though, and not on converged architectures that seek to unite compute and storage into a single appliance.

SATA/SAS disks

For anyone who has ever opened up a computer, this storage form factor is familiar. This is simply a SATA or SAS 2.5” or 3.5” disk that is used to store data. When used as a part of a broader storage architecture, this local storage can be configured as a massive cache designed to accelerate storage performance, or it can be used as a high-speed local storage repository.

PCI-e

As is the case with SAS/SATA disks, PCI-e storage is often used as a way to accelerate existing storage systems by acting as an intermediary between servers and storage arrays. These devices provide both read and write caching capabilities and can enable organizations to retain existing legacy storage systems while, at the same time, solving performance issues that may be inherent in these systems. PCI-e flash devices are often among the faster and less latency-prone storage solutions thanks to their location right on the system bus.

DIMM

More recently, newer vendors have begun looking for ways to drive latency figures even lower than is possible with PCI-e cards. One way to do this is to move storage as close to the processor as possible. A server’s DIMM sockets, generally used for system memory, are about as close as one can get to the processor without building storage right into the processor itself. DIMM-based flash storage provides latency figures that can be in the low microseconds, making this kind of storage a perfect fit when time is of the essence.

Watch: 400GB on a DIMM with Kevin Wagner from Diablo Technologies

The primary challenge with DIMM-based storage is that systems require BIOS-level changes in order to select whether a DIMM socket is used for RAM or for system storage. However, some server vendors are beginning to include these settings in their newer servers.

Array-Based

Although server-side storage has become a real contender and enables new kinds of storage opportunities, storage arrays have certainly not fallen by the wayside. In fact, there are all kinds of vendors in the array-based storage market, each working hard to solve what they consider to be the most pressing storage challenges faced by organizations. With legacy storage, almost all arrays used spinning disk. In today’s flash world, two new kinds of arrays have emerged as performance (and sometimes capacity) based contenders.

All Flash

When it comes to raw performance in the form of IOPS, not much can beat a fully equipped all flash storage array. As the name suggests, these arrays are equipped with solid state storage only and, as such, can provide tens of thousands, hundreds of thousands, and even millions of IOPS in a single shelf.

Perhaps the most significant challenge in these all flash systems is capacity, since flash has yet to catch up with spinning disk when it comes to plummeting $/GB. However, all flash solutions are generally intended to address situations in which $/GB may not be the primary metric. Further, vendors in the space have gone to great pains to include comprehensive data reduction features, such as compression and deduplication, so that they can provide customers with great performance and reasonable capacity.
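The effect of data reduction on cost can be expressed as simple arithmetic: the effective $/GB is the raw $/GB divided by the reduction ratio. The sketch below uses placeholder numbers, and the achievable ratio depends heavily on the data being stored, so treat both the figures and the function name as assumptions.

```python
def effective_usd_per_gb(raw_usd_per_gb, reduction_ratio):
    """Cost per GB of logical data after compression and deduplication.

    reduction_ratio is logical size divided by physical size,
    for example 4.0 for a 4:1 reduction.
    """
    if reduction_ratio <= 0:
        raise ValueError("reduction_ratio must be positive")
    return raw_usd_per_gb / reduction_ratio

# Placeholder inputs: $2.00 per raw GB with a 4:1 reduction ratio.
print(effective_usd_per_gb(2.00, 4.0))  # 0.5
```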

Hybrid: Flash Storage And Rotational Storage Combined

For organizations that need better performance from storage but that don’t need all flash and that want to retain the capacity benefits of spinning disk, hybrid storage has emerged as a way to get the best of both worlds. Hybrid storage is a combination of flash storage and spinning disk. In these arrays, the flash storage can act as both a very fast storage tier and a massive cache that the system can use to accelerate the spinning disk. These systems provide an approach that balances both $/GB and $/IOPS.
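The caching role of flash in a hybrid array can be sketched as a small read cache sitting in front of a slower backing store. This is a toy model under stated assumptions: the least-recently-used eviction policy, the `HybridReadCache` class, and the dictionary standing in for spinning disk are all illustrative choices, and real arrays use their own vendor-specific placement logic.

```python
from collections import OrderedDict

class HybridReadCache:
    """Toy flash read cache in front of a slower backing store."""

    def __init__(self, backing_store, flash_blocks):
        self.backing = backing_store   # dict standing in for spinning disk
        self.capacity = flash_blocks   # number of blocks the flash tier holds
        self.flash = OrderedDict()     # block_id -> data, oldest entry first
        self.hits = 0
        self.misses = 0

    def read(self, block_id):
        if block_id in self.flash:
            # Served from flash: mark the block as most recently used.
            self.flash.move_to_end(block_id)
            self.hits += 1
            return self.flash[block_id]
        # Cache miss: fetch from the slow tier and keep a copy in flash.
        self.misses += 1
        data = self.backing[block_id]
        self.flash[block_id] = data
        if len(self.flash) > self.capacity:
            self.flash.popitem(last=False)  # evict least recently used block
        return data

disk = {i: f"block-{i}" for i in range(10)}
cache = HybridReadCache(disk, flash_blocks=2)
for block in [1, 2, 1, 3, 2]:
    cache.read(block)
print(cache.hits, cache.misses)  # 1 4
```

Only the repeated read of block 1 is served from flash here; a larger flash tier or a workload with more locality would raise the hit count.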

Summary

Flash storage is a rapidly evolving market with new innovations arriving all the time, from new kinds of flash storage itself to new packaging types that are intended to solve emerging problems. Don’t expect the flash market to slow down anytime soon. And make sure to understand the facts and the fiction about the technology so that you can make the best possible decision for your specific needs.